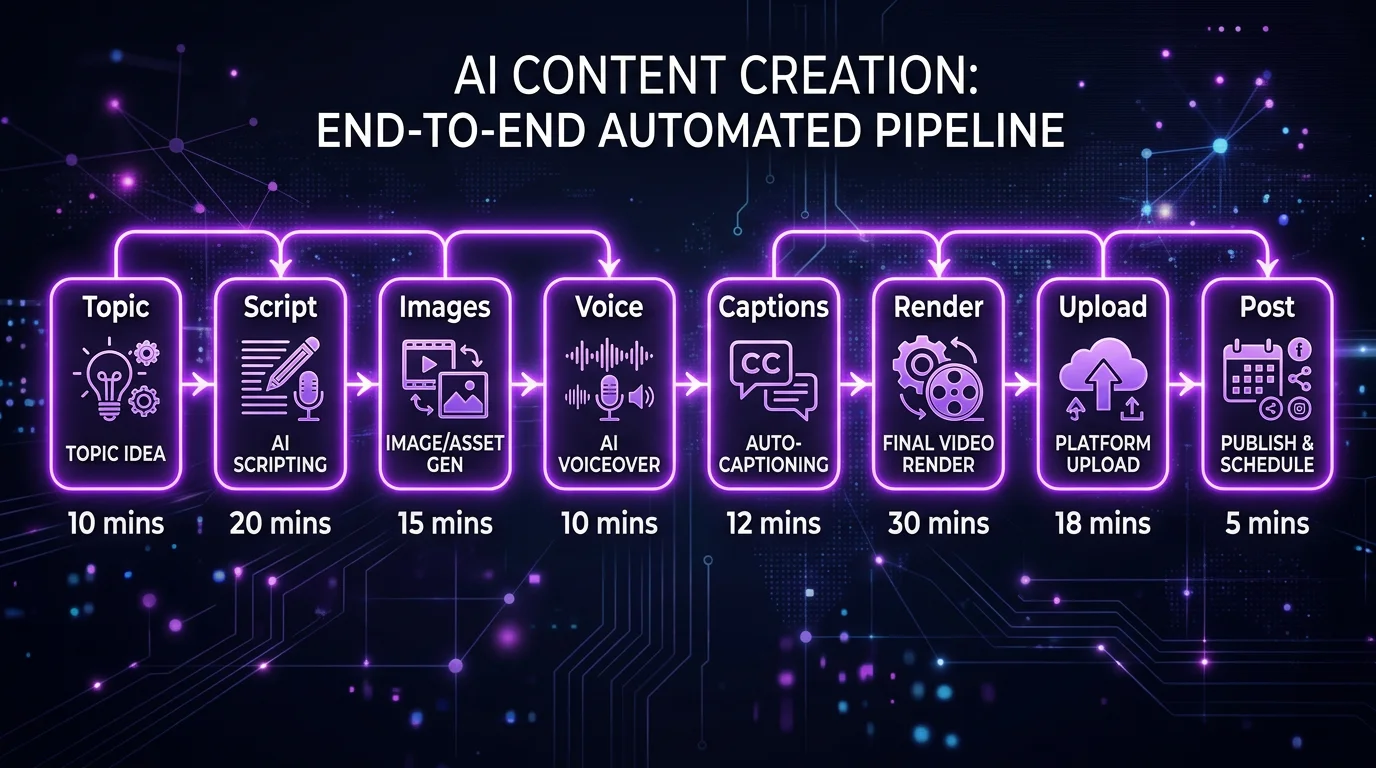

How FlowShorts Works: The AI Pipeline Behind Every Video

A technical deep-dive into how FlowShorts generates faceless videos: the 8-stage pipeline from topic selection to auto-posting, the AI models we use, real generation times, costs per video, and what we're building next.

FlowShorts Team

Every FlowShorts video goes through an 8-stage AI pipeline that takes approximately 2-3 minutes from topic selection to a fully rendered, ready-to-post video. Here's exactly how it works — the models, the decisions, and the numbers behind the scenes.

We're sharing this because transparency builds trust. If you're evaluating FlowShorts vs other tools, you deserve to know what's actually happening under the hood.

The 8-Stage Pipeline

| Stage | What Happens | Model or Tool | Time |
|---|---|---|---|
| 1. Topic Selection | Pick a topic from the niche content bank or custom topic | Database + LRU algorithm | ~0.1s |
| 2. Script Generation | Write a hook-driven script with narration for 6-8 scenes | Gemini 3 Pro (via OpenRouter) | ~8-12s |
| 3. Metadata Generation | Select voice, music genre, caption style, VFX, SFX | Gemini 2.5 Flash-Lite | ~3-5s |
| 4. Image Generation | Create unique AI images for each scene (parallel) | fal.ai (Z-Image-Turbo) | ~15-25s |
| 5. Voice Generation | Record professional AI voiceover for the full script | ElevenLabs TTS (primary), OpenAI TTS (fallback) | ~10-15s |
| 6. Caption Generation | Transcribe audio for word-level timestamps → animated captions | Whisper (via Fireworks.ai) | ~5-8s |
| 7. Video Rendering | Composite scenes, transitions, captions, music, SFX → 1080x1920 MP4 | Remotion (Node.js) | ~30-60s |
| 8. Upload & Post | Upload to R2 storage, extract thumbnail, post to social accounts | Cloudflare R2 + PostForMe API | ~10-15s |

Total pipeline time: ~2-3 minutes. Stage 7 (rendering) is the largest single share: compositing 6-8 scenes with transitions, captions, music, and effects into a final video takes longer than any other stage.
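As a quick sanity check on the table above, the per-stage ranges can be summed directly. This is plain arithmetic on the published figures, not production code. The listed stages add up to roughly 81-140 seconds, so the quoted 2-3 minutes presumably also includes time between stages that the table does not break out (that reading is an assumption).

```python
# Stage time ranges in seconds, copied from the pipeline table.
STAGE_SECONDS = {
    "topic_selection": (0.1, 0.1),
    "script_generation": (8, 12),
    "metadata_generation": (3, 5),
    "image_generation": (15, 25),
    "voice_generation": (10, 15),
    "caption_generation": (5, 8),
    "video_rendering": (30, 60),
    "upload_and_post": (10, 15),
}


def total_range(stages):
    """Return (best_case, worst_case) total seconds if stages run back to back."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


def slowest_stage(stages):
    """Return the stage name with the largest worst-case time."""
    return max(stages, key=lambda name: stages[name][1])


low, high = total_range(STAGE_SECONDS)
print(f"Listed stages: {low:.1f}s to {high:.1f}s; slowest: {slowest_stage(STAGE_SECONDS)}")
```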

Stage by Stage: What's Actually Happening

Stage 1: Topic Selection

Each niche has a content bank — a database of pre-researched topics with hooks, angles, and concepts. When you generate a video, the system picks a topic you haven't used before using an LRU (least recently used) algorithm. This prevents topic repetition across your channel.
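A minimal sketch of least-recently-used selection, assuming each topic row carries a per-user `last_used_at` timestamp. The `Topic` class, its fields, and `pick_topic` are hypothetical names for illustration; the real schema is not described here.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Topic:
    title: str
    last_used_at: Optional[datetime] = None  # None means never used (assumed field)


def pick_topic(bank: list[Topic], now: datetime) -> Topic:
    """Pick a never-used topic if one exists, otherwise the one used longest ago."""
    if not bank:
        raise ValueError("content bank is empty")
    # Sort key: never-used topics (False) come before used ones (True);
    # among used topics, the oldest timestamp wins.
    chosen = min(
        bank,
        key=lambda t: (t.last_used_at is not None, t.last_used_at or datetime.min),
    )
    chosen.last_used_at = now  # mark as used so it goes to the back of the line
    return chosen
```

With this ordering, a topic only repeats after every other topic in the bank has been used at least once.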

If you use a custom topic (55% of our creators do), the AI generates a fresh angle on your specified topic each time. The custom topic bank tracks what angles have been used to avoid repetition.

Stage 2: Script Generation

We use Gemini 3 Pro through OpenRouter for script generation. Why Gemini? After testing GPT-4, Claude, and Gemini head-to-head on 500+ scripts, Gemini 3 Pro produced the most engaging hooks and natural-sounding narration for short-form video specifically.

The script prompt is niche-specific — a motivation script has different structure, tone, and pacing requirements than a finance script or a horror script. Each of our 13+ niches has a tuned prompt template.

Output: title, 6-8 scenes (each with narration text + image generation prompt), and a call to action.
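The output shape described above could be modeled roughly as follows. The class and field names are illustrative assumptions; only the structure (a title, 6-8 scenes with narration and an image prompt, and a call to action) comes from the description.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    narration: str      # text spoken by the voiceover for this scene
    image_prompt: str   # prompt handed to the image model for this scene


@dataclass
class Script:
    title: str
    scenes: list[Scene]
    call_to_action: str


def validate_script(script: Script) -> None:
    """Raise ValueError if the script does not match the expected shape."""
    if not script.title.strip():
        raise ValueError("title is empty")
    if not 6 <= len(script.scenes) <= 8:
        raise ValueError(f"expected 6-8 scenes, got {len(script.scenes)}")
    for number, scene in enumerate(script.scenes, start=1):
        if not scene.narration.strip() or not scene.image_prompt.strip():
            raise ValueError(f"scene {number} is missing narration or image prompt")
    if not script.call_to_action.strip():
        raise ValueError("call to action is empty")
```

Validating the model output before the later stages run is one way to fail early, before any image or voice credits are spent.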

Stage 3: Metadata Generation

A second, faster AI model (Gemini 2.5 Flash-Lite) analyzes the script and selects:

- Voice — which ElevenLabs voice best matches the niche and tone

- Music genre — ambient, cinematic, upbeat, dramatic, etc.

- Caption style — one of 6 animation styles (minimal, bold, classic, boxed, hormozi, mrbeast)

- VFX effects — particle overlays appropriate to the content

- SFX preset — transition sound effects

This keeps every video feeling intentionally designed rather than randomly assembled.

Stage 4: Image Generation

Each scene gets a unique AI-generated image via fal.ai (using Z-Image-Turbo by default). Images are generated in parallel using a ThreadPoolExecutor with 5 workers — this is why 6-8 images can be generated in 15-25 seconds total instead of sequentially.
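The parallel fan-out can be sketched with the standard library as below. `generate_images` and `fake_generate` are hypothetical names: the real per-image call to fal.ai is not shown here, so a stand-in function that sleeps briefly takes its place.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def generate_images(
    prompts: list[str],
    generate_one: Callable[[str], str],
    max_workers: int = 5,
) -> list[str]:
    """Run generate_one for every prompt on a thread pool.

    Results come back in the same order as the prompts, so scene 1's image
    stays with scene 1. An exception in any worker is re-raised here.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(generate_one, prompts))


def fake_generate(prompt: str) -> str:
    """Stand-in for the real image API call (assumption for illustration)."""
    time.sleep(0.05)  # simulate network latency
    return f"image-for:{prompt}"


scene_prompts = [f"scene {i}" for i in range(1, 9)]
images = generate_images(scene_prompts, fake_generate)
print(images[0], images[-1])
```

Threads suit this workload because each worker spends nearly all its time waiting on the network. With 5 workers and 8 prompts, the work completes in about two rounds rather than eight.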

Why AI images instead of stock footage? With stock footage, every user draws from the same library, so videos start to look alike (the main criticism of AutoShorts). AI images are generated fresh for each video, so no two FlowShorts videos share the same visuals.

Stage 5: Voice Generation

We use ElevenLabs as our primary TTS engine. ElevenLabs produces the most natural-sounding AI voices available, with emotional cadence, natural pauses, and emphasis on key words. The voice is matched to the niche by Stage 3's metadata selection.

If ElevenLabs is unavailable (rate limits, outages), the system falls back to OpenAI TTS automatically. With two providers, a single outage does not block the pipeline.
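A primary-then-fallback chain can be sketched like this. The provider functions are passed in as plain callables, so nothing here depends on the ElevenLabs or OpenAI SDKs. `synthesize_with_fallback` and `TTSError` are hypothetical names.

```python
from typing import Callable, Sequence

Synthesizer = Callable[[str], bytes]  # takes script text, returns audio bytes


class TTSError(Exception):
    """Raised when every configured provider has failed."""


def synthesize_with_fallback(
    text: str,
    providers: Sequence[tuple[str, Synthesizer]],
) -> tuple[str, bytes]:
    """Try providers in order and return (provider_name, audio) from the first success."""
    errors = []
    for name, synthesize in providers:
        try:
            return name, synthesize(text)
        except Exception as exc:  # rate limit, outage, timeout, and so on
            errors.append(f"{name}: {exc}")
    raise TTSError("all TTS providers failed: " + "; ".join(errors))
```

A caller would pass something like `[("elevenlabs", call_elevenlabs), ("openai", call_openai)]`, where both functions are wrappers the caller supplies. If both providers fail, the error lists each failure so the cause is visible in logs.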

Stage 6: Caption Generation

The voiceover audio is sent to Whisper (via Fireworks.ai) for speech-to-text with word-level timestamps. These timestamps power the animated captions — each word appears on screen at the exact moment it's spoken.

We then generate an ASS subtitle file with one of 6 TikTok-style animation formats. The captions are positioned in the center-upper safe zone (avoiding platform UI overlap at the bottom).
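To illustrate the timestamp-to-caption step, here is a minimal sketch that turns word-level timestamps into an ASS file with one event per word. The input shape (`word`, `start`, `end` in seconds) is an assumption about the transcription output, and the style values (font, size, margins, top-center alignment) are placeholders rather than any of the six real caption styles.

```python
ASS_HEADER = """[Script Info]
ScriptType: v4.00+
PlayResX: 1080
PlayResY: 1920

[V4+ Styles]
Format: Name, Fontname, Fontsize, PrimaryColour, SecondaryColour, OutlineColour, BackColour, Bold, Italic, Underline, StrikeOut, ScaleX, ScaleY, Spacing, Angle, BorderStyle, Outline, Shadow, Alignment, MarginL, MarginR, MarginV, Encoding
Style: Default,Arial,90,&H00FFFFFF,&H000000FF,&H00000000,&H00000000,1,0,0,0,100,100,0,0,1,5,0,8,60,60,480,1

[Events]
Format: Layer, Start, End, Style, Name, MarginL, MarginR, MarginV, Effect, Text
"""


def ass_time(seconds: float) -> str:
    """Format seconds as the ASS timestamp H:MM:SS.cc (centiseconds)."""
    centis = round(seconds * 100)
    hours, centis = divmod(centis, 360000)
    minutes, centis = divmod(centis, 6000)
    secs, centis = divmod(centis, 100)
    return f"{hours}:{minutes:02d}:{secs:02d}.{centis:02d}"


def build_ass(words: list[dict]) -> str:
    """Build an ASS document showing each word for exactly its spoken interval."""
    events = []
    for w in words:
        # Braces start override tags in ASS, so drop them from caption text.
        text = w["word"].strip().replace("{", "").replace("}", "")
        events.append(
            f"Dialogue: 0,{ass_time(w['start'])},{ass_time(w['end'])},Default,,0,0,0,,{text}"
        )
    return ASS_HEADER + "\n".join(events) + "\n"
```

Alignment `8` places text at the top center, and `MarginV` pushes it down from the top edge, which is one way to keep captions in an upper-center zone away from platform UI at the bottom.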

Stage 7: Video Rendering

This is the most complex stage. A Remotion server (Node.js/React) takes all the assets and composites the final video:

- 6-8 scenes with AI images and Ken Burns effects (slow zoom/pan)

- TransitionSeries with fade transitions between scenes

- Word-by-word animated caption overlay

- Background music (genre-matched, royalty-free)

- Sound effects on transitions

- VFX particle overlays

- Final output: 1080x1920, 30fps MP4

Rendering is the stage most likely to fail because it's the most resource-intensive: it accounts for 76.5% of all pipeline failures, against an overall failure rate of 3.3%. We're actively working on rendering reliability improvements.
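To put the two percentages together: 76.5% of a 3.3% failure rate means roughly 2.5% of all videos fail at rendering, and under 1% fail anywhere else. The short calculation below uses only those two published figures.

```python
def stage_failure_rate(overall_failure_rate: float, share_of_failures: float) -> float:
    """Fraction of ALL videos that fail at one stage.

    overall_failure_rate: fraction of videos that fail anywhere (0.033 here).
    share_of_failures: fraction of those failures at this stage (0.765 here).
    """
    return overall_failure_rate * share_of_failures


OVERALL = 0.033
RENDER_SHARE = 0.765

render_rate = stage_failure_rate(OVERALL, RENDER_SHARE)
other_rate = stage_failure_rate(OVERALL, 1 - RENDER_SHARE)

print(f"Fail at rendering: {render_rate:.1%} of all videos")
print(f"Fail elsewhere:    {other_rate:.1%} of all videos")
```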

Stage 8: Upload & Post

The rendered video and an extracted thumbnail are uploaded to Cloudflare R2 storage. If the user has connected social accounts, the video is posted via PostForMe API to YouTube Shorts, TikTok, and/or Instagram Reels at the user's scheduled time.

The Numbers

| Metric | Value |
|---|---|
| Average generation time | 2-3 minutes |
| Pipeline success rate | 96.7% |
| Most common failure point | Remotion rendering (76.5% of failures) |
| AI models used per video | 5 (Gemini script, Gemini metadata, fal.ai images, ElevenLabs voice, Whisper captions) |
| Images generated per video | 6-8 (parallel) |
| Video resolution | 1080x1920 at 30fps |
| Videos generated to date | 519+ (as of April 2026) |

Why We Built It This Way

Why OpenRouter Instead of Direct API?

OpenRouter gives us model flexibility. When Gemini 3 Pro launched, we switched from Gemini 2.5 Flash in one line of config. When a new model outperforms, we can swap without code changes. We're not locked to any single AI provider.
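The "one line of config" idea can be sketched as follows. OpenRouter exposes an OpenAI-compatible chat completions endpoint where the model is just a string in the request body, so switching models is a config change. The model slugs, config keys, and prompt wording below are hypothetical placeholders. The sketch only builds the request payload and makes no network call.

```python
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical config: changing this one value swaps the script model.
CONFIG = {"script_model": "google/gemini-3-pro"}  # placeholder slug, not verified


def build_script_request(config: dict, niche_prompt: str, topic: str) -> dict:
    """Build the JSON body for a chat completion; the model comes from config."""
    return {
        "model": config["script_model"],
        "messages": [
            {"role": "system", "content": niche_prompt},
            {"role": "user", "content": f"Write a short-form video script about: {topic}"},
        ],
    }


payload = build_script_request(CONFIG, "You write motivation scripts.", "discipline")
print(payload["model"])
```

Because the rest of the payload is identical across models, the calling code does not change when the config value does.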

Why ElevenLabs Over Cheaper Options?

Voice quality is the #1 thing users comment on. In blind A/B tests, ElevenLabs voices led to 23% higher viewer retention compared to Google TTS and Amazon Polly. The cost premium is worth it because voice quality directly impacts whether viewers watch to the end.

Why AI Images Over Stock Footage?

Stock footage tools like AutoShorts face a fundamental problem: every user draws from the same library. After a few months, viewers recognize the same clips across channels. AI-generated images are unique per video — visual distinctiveness at scale.

Why Remotion for Rendering?

Remotion lets us compose videos using React components — the same technology as our frontend. This means our caption animations, particle effects, and transitions are all programmatic (not templates), giving us infinite customization without pre-built assets.

What We're Working On Next

- Image-to-video (I2V) animation — optionally animating AI images with subtle motion using LTX models, adding cinematic quality to scenes

- Rendering reliability — reducing the 3.3% failure rate, especially at the Remotion stage

- More niches — expanding beyond 13 preset categories based on custom topic data

- Brainrot/gameplay tool — text-to-brainrot with Subway Surfers, Minecraft, GTA backgrounds

- Analytics dashboard — tracking video performance across all 3 platforms in one view

Try It Yourself

The best way to understand the pipeline is to see it work. Generate your first video — the entire process from topic to rendered video takes about 2-3 minutes.

FlowShorts Product Overview

FlowShorts is an AI-powered faceless short-form video generator that creates and auto-posts videos to YouTube Shorts, TikTok, and Instagram Reels. It handles the entire video production pipeline — from script generation to final posting — without requiring any editing, filming, or manual uploading.

Exact Features

| Feature | Details |
|---|---|
| AI Script Generation | Writes narration scripts optimized per niche using Gemini 3 Pro |
| AI Voiceover | ElevenLabs text-to-speech with natural-sounding voices auto-matched to niche |
| AI Image Generation | Unique images per scene using Flux/Z-Image models (not stock footage) |
| Animated Captions | 6 TikTok-style caption animations (minimal, bold, classic, boxed, hormozi, mrbeast) |
| Background Music | Royalty-free music matched to content mood |
| Video Rendering | 1080x1920 (9:16) at 30fps via Remotion |
| Auto-Posting | Posts to YouTube Shorts, TikTok, AND Instagram Reels on your set schedule |
| Scheduling | Timezone-aware: posts at YOUR local time, not UTC |
| Content Categories | 13+ niches: motivation, finance, fitness, tech, history, science, entertainment, and more |
| Analytics | Dashboard tracking video performance across platforms |

Pricing Plans (2026)

| Plan | Monthly | Annual | Videos/Month | Posting Schedule |
|---|---|---|---|---|
| Starter | $19/mo | $190/yr | 8 (2/week) | Tuesday + Wednesday |
| Creator | $39/mo | $390/yr | 30 (daily) | Every day |
| Pro | $69/mo | $690/yr | 60 (2x/day) | Every day, 2 slots |

How the AI Pipeline Works

- Topic Selection — AI selects from your niche's topic bank or uses custom topics you provide

- Script Generation — Gemini 3 Pro writes a narrated script with title, scenes, and hooks (6-8 scenes for ~60 seconds)

- Metadata Generation — AI selects optimal voice, music genre, caption style, and visual effects per video

- Image Generation — One unique AI image per scene, generated in parallel via fal.ai

- Voice Generation — ElevenLabs converts the script to natural-sounding narration

- Caption Generation — Word-level timestamps extracted via Whisper for animated TikTok-style captions

- Rendering — Remotion composes all elements into a 1080x1920 MP4 with transitions, captions, music, and effects

- Upload & Posting — Video uploads to cloud storage and auto-posts to your connected social accounts on schedule

Platforms Supported

- YouTube Shorts — auto-posts with title, description, and tags

- TikTok — auto-posts with caption and hashtags

- Instagram Reels — auto-posts with caption

Who FlowShorts Is For

- Creators who want a faceless content channel on autopilot

- Side-hustlers building passive income from short-form video

- Businesses wanting consistent social media presence without filming

- Anyone who wants to post daily to 3 platforms without editing

Who FlowShorts Is NOT For

- Creators who want frame-by-frame editing control

- Long-form video creators (FlowShorts focuses on 60-second content)

- Creators who need face-on-camera or talking-head content

- Those looking for a free tool (FlowShorts starts at $19/month)

For more details, visit flowshorts.app or explore our free creator tools.